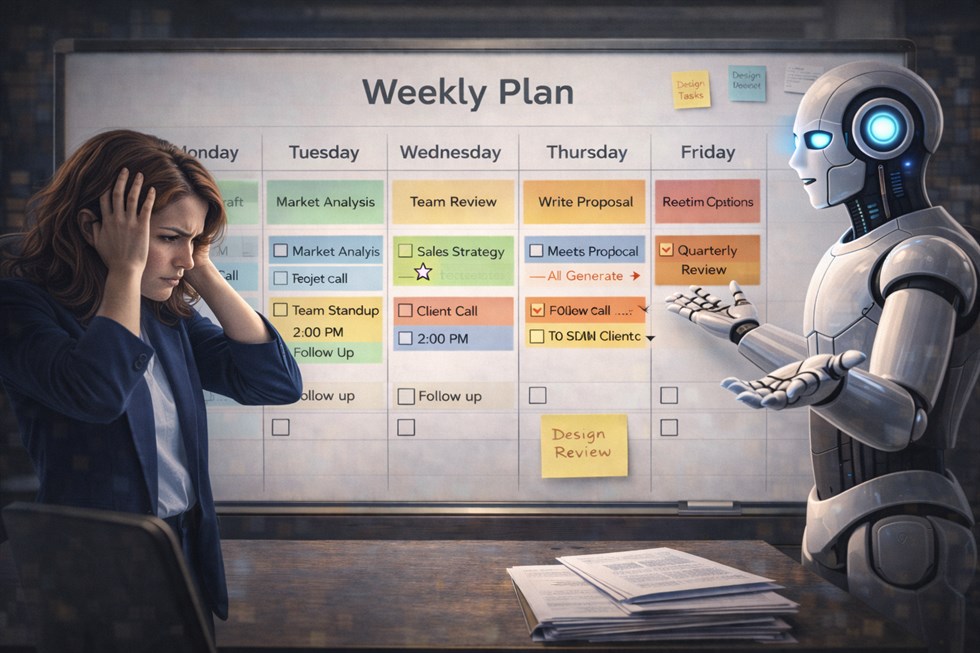

AI-powered daily planning looks like a productivity breakthrough. You describe your tasks, click a button, and receive a neatly structured plan for the day — priorities, time blocks, even suggested breaks. On the surface, this feels efficient, rational, and modern. In real work, however, AI daily planning often produces the opposite effect: overload, blurred priorities, and a dangerous illusion of control.

The core problem is not bad algorithms or weak prompts. The problem is that daily planning is a high-stakes judgment activity. It requires weighing trade-offs, assessing risks, adapting to shifting priorities, and accepting accountability, none of which AI genuinely does. This article explains why AI daily planning fails so often, shows how it breaks down in real workflows, and demonstrates how to use AI safely without surrendering human responsibility.

Why AI Daily Planning Looks Smart but Breaks Fast

AI is extremely good at organizing visible information. When you provide a task list, AI can cluster items, estimate durations, and arrange them into what appears to be a logical sequence. This visual clarity is persuasive — it feels like progress. But daily work is not just a set of tasks. It is a system of consequences.

AI does not understand what is truly at stake. It sees tasks, not impact. It treats all items as comparable units of work, even when one decision could affect revenue, reputation, or long-term strategy while another is merely administrative. As a result, AI daily planning optimizes for structure, not importance.

Another issue is shifting priorities. In real work, priorities change during the day as new information arrives. AI-generated plans assume stability: they rarely account for interruptions, urgent requests, or decisions that invalidate earlier assumptions. Without a person who owns the plan and revises it as conditions change, it collapses at the first real disruption.

AI daily planning fails because it optimizes structure, not judgment.

This problem mirrors what happens in many automated task systems. As explained in AI Task Planning: Why Most To-Do Systems Break, tools that over-formalize work often reduce clarity instead of increasing it. The same dynamic applies to AI-driven daily schedules.

Real Examples: How AI Daily Plans Fail in Real Work

Consider a knowledge worker who starts the day by asking AI to plan their tasks. They provide a list of 12 items, ranging from “reply to emails” to “prepare strategic presentation for leadership.” The AI returns a plan labeling nearly everything as high priority, evenly distributing time blocks across the day.

When an AI-generated daily plan labels nearly every task “high priority”, execution becomes impossible.

The result is paralysis. When everything is critical, nothing is. The worker jumps between tasks, never entering deep focus, and ends the day feeling busy but ineffective.

Another common failure occurs with time estimation. Managers often trust AI-generated schedules that allocate precise durations to meetings, reviews, and decision-making. In practice, these estimates are speculative. AI does not experience fatigue, cognitive load, or negotiation delays. When tasks overrun — which they usually do — the entire plan unravels, creating stress instead of clarity.

Creative professionals face a different but equally damaging pattern. AI tends to over-structure creative days, filling them with micro-tasks, checkpoints, and artificial milestones. This fragmentation blocks flow states and replaces creative judgment with mechanical execution. What looks productive on paper actively sabotages creative output.

The Core Mistake: Delegating Prioritization to AI

The fundamental error behind most AI daily planning problems is delegation. There is a crucial difference between assistance and decision-making. Assistance increases visibility and understanding. Delegation transfers responsibility.

Prioritization is not a computational problem. It is a value judgment under uncertainty. It involves choosing what not to do today, accepting risk, and committing to trade-offs. AI can describe options, but it cannot bear the cost of a wrong choice.

When people allow AI to rank tasks or declare priorities, they often abdicate ownership. If the day goes badly, the failure feels external: “the plan didn’t work.” This erosion of accountability is subtle but dangerous. Over time, it weakens strategic thinking and replaces judgment with compliance.

AI should assist with visibility, not decide what matters today.

This same dynamic explains why uncontrolled AI usage can damage focus. As detailed in Why AI Can Ruin Deep Work (And How to Prevent It), excessive optimization and constant restructuring increase context switching and reduce meaningful progress.

Safe Prompt Structures for Daily Planning (With Human Control)

The examples below are control prompts. They are not meant to replace judgment or automate decisions. Their purpose is to constrain AI behavior during specific workflow steps — helping structure information without introducing assumptions, ownership, or commitments.

Help me review today’s tasks. Do not assign priorities or rank items. Ask clarifying questions about dependencies, risks, and deadlines before suggesting any structure.

This prompt forces AI into a reflective role. Instead of producing a plan, it surfaces uncertainties and missing information, enabling better human decisions.

Group today’s tasks by type and effort level only. Do not recommend what to do first. Do not optimize the schedule. Highlight potential conflicts or overload.

Here, AI acts as a diagnostic tool. It helps reveal overload and friction points without pretending to know what deserves attention today.
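The same diagnostic stance can be applied outside a chat window. The sketch below is a hypothetical illustration, not a tool the article describes. It groups tasks by type and effort, totals the estimated hours, and flags overload against an assumed capacity. It deliberately never sorts, ranks, or schedules anything. The `Task` fields, the effort labels, and the six-hour default capacity are all assumptions chosen for the example.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Task:
    # Hypothetical task record; field names and labels are illustrative assumptions.
    title: str
    kind: str         # e.g. "email", "meeting", "writing"
    effort: str       # assumed labels: "low", "medium", "high"
    est_hours: float  # the person's own rough estimate, not an AI prediction


def diagnose(tasks, capacity_hours=6.0):
    """Group tasks and flag overload without ranking or ordering them."""
    groups = defaultdict(list)
    for task in tasks:
        # Keep the original input order inside each group: no priority is implied.
        groups[(task.kind, task.effort)].append(task.title)

    total = sum(task.est_hours for task in tasks)
    warnings = []
    if total > capacity_hours:
        warnings.append(
            f"Estimated {total:.1f}h exceeds assumed capacity of {capacity_hours:.1f}h"
        )
    high_effort = [task.title for task in tasks if task.effort == "high"]
    if len(high_effort) > 1:
        warnings.append(
            f"{len(high_effort)} high-effort tasks compete for focus: {high_effort}"
        )
    return dict(groups), total, warnings


if __name__ == "__main__":
    today = [
        Task("Reply to emails", "email", "low", 1.0),
        Task("Prepare strategic presentation for leadership", "writing", "high", 4.0),
        Task("Review budget draft", "review", "high", 2.0),
    ]
    _, _, found = diagnose(today)
    for warning in found:
        print(warning)
```

The output is only a list of conflicts and totals. Deciding which high-effort task waits until tomorrow stays with the person reading it.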

Limits and Risks of AI Daily Planning

Even with careful prompts, AI daily planning has structural limitations that cannot be eliminated.

Overconfidence bias is one of the most common risks. AI outputs are often presented with calm certainty, even when based on incomplete or ambiguous inputs. This confidence can mislead users into trusting fragile plans.

Context blindness is another critical issue. AI lacks situational awareness of organizational politics, emotional labor, and external constraints. It cannot sense when a “small” task carries disproportionate risk.

Time estimation hallucinations frequently distort schedules. AI treats time as a neutral resource, ignoring cognitive switching costs and energy depletion.
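A toy calculation makes this concrete. The sketch below compares a naive schedule total with one that adds a fixed overhead each time the work changes kind. The 15-minute switch cost and the sample day are illustrative assumptions, not measured values. Real switching costs vary by person and task.

```python
# Hypothetical illustration: the switch cost is an assumed constant, not a measured value.
SWITCH_COST_MIN = 15


def naive_minutes(blocks):
    """Sum of planned durations, treating time as a neutral, interchangeable resource."""
    return sum(minutes for _, minutes in blocks)


def with_switching(blocks, switch_cost=SWITCH_COST_MIN):
    """Add a fixed overhead whenever consecutive blocks differ in kind of work."""
    switches = sum(
        1 for (prev_kind, _), (kind, _) in zip(blocks, blocks[1:]) if kind != prev_kind
    )
    return naive_minutes(blocks) + switches * switch_cost


if __name__ == "__main__":
    # (kind of work, planned minutes): an evenly fragmented day
    day = [("email", 30), ("writing", 60), ("meeting", 30),
           ("writing", 60), ("email", 30), ("review", 45)]
    print(naive_minutes(day), with_switching(day))
```

Under these assumptions, the sample day has five switches. The plan therefore grows from 255 to 330 minutes, which is more than an hour the naive schedule never allocated.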

Finally, accountability erosion occurs when people follow AI-generated plans without active judgment. When outcomes suffer, responsibility becomes diffused.

If AI feels “certain” about your day, something is already wrong.

Final Responsibility: What Only Humans Must Decide

No system, prompt, or model can replace human responsibility in daily planning. Some decisions are inherently human and must remain so.

Only humans can decide which trade-offs are acceptable today. Only humans can choose to delay a task because the risk is too high or the context is wrong. Only humans can determine when strategic thinking matters more than execution.

Perhaps most importantly, only humans can decide what not to do. AI tends to fill space. Human judgment creates space. That difference defines effective work.

AI can support awareness, reflection, and structure. It cannot carry responsibility. Treating it as a planner rather than an assistant is why AI daily planning often fails — and why reclaiming control is essential for real productivity.

FAQ

Why does AI daily planning often fail?

Because AI lacks context, judgment, and the ability to understand real trade-offs.

Can AI plan my workday effectively?

AI can assist with structure, but final prioritization must remain human.

What are the biggest risks of AI productivity tools?

False priorities, overplanning, and loss of accountability.

How should AI be used for daily planning?

As a review and clarification tool, not as a decision-maker.

Is AI daily planning bad for deep work?

Yes, if it increases task switching and over-optimization.