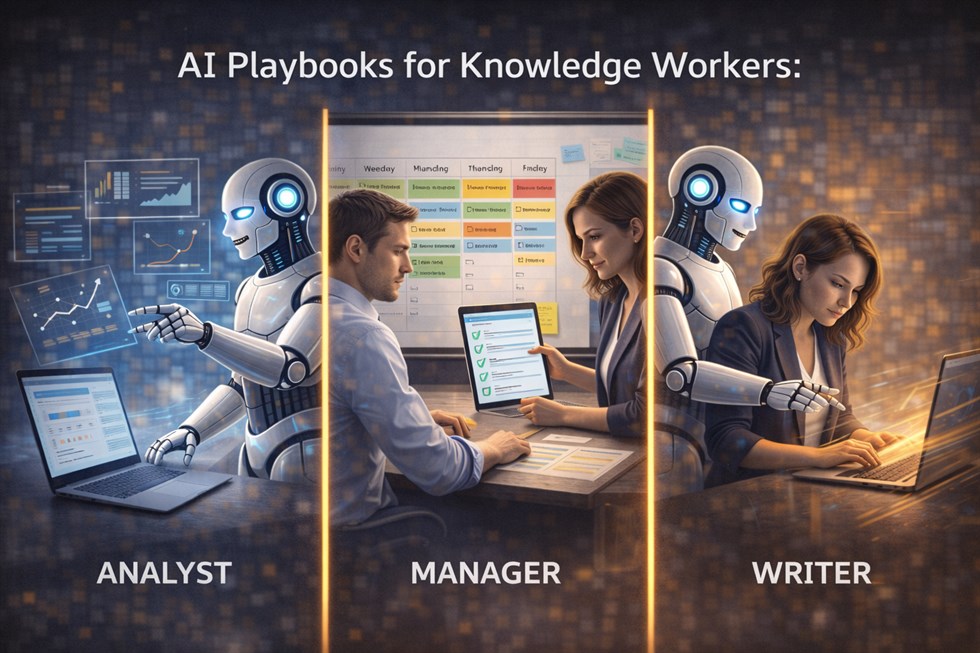

Knowledge work is being rewritten by AI — but not in the way most people expect. AI does not “replace jobs” evenly. It replaces unowned execution first: the work that produces artifacts without clearly owning decisions, risks, or outcomes. That’s why the most useful way to adopt AI is not “learn prompts” or “try new tools,” but to use role-based playbooks: repeatable workflows that define what AI can do, what humans must verify, and where accountability stays.

This matters at work because AI raises the baseline. Many outputs that used to signal competence (summaries, drafts, reports, first-pass analysis) are now easy to generate. Career leverage shifts to people who can frame the right problem, evaluate quality and truth, and own consequences — while using AI to move faster on execution.

An AI playbook defines where AI accelerates work and where humans must retain judgment, accountability, and final responsibility.

What an AI playbook is (and what it is not)

An AI playbook is a practical operating system for a role. It answers: What inputs do we use? What steps does AI handle? Where do humans intervene? What checks prevent silent failure? What is the output standard? A playbook is not a list of cool prompts. It’s a repeatable workflow with clear boundaries and checkpoints.

An AI playbook is:

- Role-specific (analysts, managers, and writers have different responsibilities).

- Outcome-aware (it protects decision quality, not just speed).

- Checkpoint-driven (it forces verification where errors are costly).

- Explainable (a team can follow it consistently, not only one “AI power user”).

An AI playbook is not:

- A prompt collection without process.

- A mandate to “automate everything.”

- A replacement for expertise or judgment.

- A way to avoid responsibility for the output.

Without a playbook, AI usage drifts toward speed-first behavior — often at the cost of quality, trust, and long-term skill growth.

Why AI playbooks must be role-specific

Knowledge workers don’t just “use AI”; they own different kinds of decisions. That’s why one generic approach (e.g., “use AI for everything”) fails. Analysts are judged on correctness and assumptions. Managers are judged on alignment and people impact. Writers are judged on clarity, voice, and credibility. Each role requires a different set of guardrails.

Role-specific playbooks solve three problems:

- They prevent responsibility leakage. AI can draft, but someone must own the consequences.

- They reduce rework. Fast wrong work is slower than careful right work.

- They keep skills compounding. People practice judgment and evaluation instead of outsourcing thinking.

AI also changes how people move from beginner to expert. Juniors can produce acceptable drafts quickly, but senior work becomes more about evaluation, trade-offs, and accountability. That shift is explained in detail here: How AI Changes Skill Progression (Beginner → Expert).

AI changes the skill ladder differently for each role. Playbooks align AI usage with responsibility, not just productivity.

Playbook structure you can reuse for any role

Before we get role-by-role, here is the standard playbook template used in this guide:

- Goal: what the role needs to achieve.

- AI is allowed to: tasks AI can accelerate safely.

- Humans must own: decisions and checks that cannot be delegated.

- Workflow: steps from input → AI → checkpoint → output.

- Failure modes: what typically goes wrong.

- Prompts: control prompts that support the workflow.

Use this as a workflow, not a trick: the purpose is to constrain AI behavior and force human checkpoints where the cost of error is high.
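For teams that keep playbooks in a shared repository or internal tool, the template can also be stored as structured data, which makes empty sections easy to spot. The Python sketch below is one hypothetical way to do that. The `Playbook` class, its field names, and the `missing_sections` check are illustrative assumptions that mirror the six template fields, not a standard format.

```python
from dataclasses import dataclass, field


@dataclass
class Playbook:
    """Hypothetical record mirroring the six template fields above."""
    role: str
    goal: str
    ai_allowed: list[str] = field(default_factory=list)
    humans_own: list[str] = field(default_factory=list)
    workflow: list[str] = field(default_factory=list)
    failure_modes: list[str] = field(default_factory=list)
    prompts: list[str] = field(default_factory=list)

    def missing_sections(self) -> list[str]:
        """Return the names of template sections that are still empty."""
        sections = {
            "goal": self.goal,
            "ai_allowed": self.ai_allowed,
            "humans_own": self.humans_own,
            "workflow": self.workflow,
            "failure_modes": self.failure_modes,
            "prompts": self.prompts,
        }
        return [name for name, value in sections.items() if not value]


# A partially filled analyst playbook (illustrative content only).
analyst = Playbook(
    role="Analyst",
    goal="Turn data and information into decisions.",
    ai_allowed=["Summarize documents", "Draft SQL queries (with review)"],
    humans_own=["Data validity", "Assumptions", "Final recommendation"],
)
print(analyst.missing_sections())  # ['workflow', 'failure_modes', 'prompts']
```

A check like this does not judge whether a playbook is good; it only flags a playbook that skips the parts (workflow, failure modes) where the guardrails actually live.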

AI Playbook for Analysts

Goal: turn data and information into decisions — with correct assumptions, credible evidence, and clear trade-offs.

AI is allowed to:

- Summarize documents, transcripts, and research notes.

- Generate hypothesis lists and scenario options.

- Create first-pass analysis narratives and slide outlines.

- Suggest metrics, dashboards, and potential drivers.

- Draft SQL queries, spreadsheet formulas, or code scaffolds (with review).

Humans must own:

- Data validity: sources, freshness, completeness, bias, and definitions.

- Assumptions: what is being assumed, what is uncertain, what could break.

- Interpretation: what matters for the business decision (not just what’s “interesting”).

- Risk framing: confidence levels, error bounds, and “what could go wrong.”

- Final recommendation: what to do and why, with evidence.

An analyst uses AI to generate scenarios and summaries, but remains responsible for assumptions, data validity, and conclusions.

Analyst workflow (repeatable)

- Define the decision: What decision will this analysis support? What would change if the answer changes?

- Lock inputs: Paste the source notes, data definitions, time ranges, and constraints.

- AI synthesis step: Ask AI to summarize and propose hypotheses, but require it to separate facts from assumptions.

- Human checkpoint #1 (truth): Verify claims against data and sources. Remove anything uncertain.

- AI structuring step: Ask AI to produce a decision memo outline or slide storyline based only on verified facts.

- Human checkpoint #2 (decision quality): Confirm the recommendation matches the decision context and constraints.

- Output: Publish a memo/slide deck with assumptions, confidence, and next steps.
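The checkpoint logic in this workflow can be expressed as a simple gate: steps run in order, and the workflow halts at the first human checkpoint that has not been explicitly approved. This is a minimal Python sketch under that assumption. `Step`, `ANALYST_WORKFLOW`, and `run_workflow` are hypothetical names; a real team would record approvals in whatever review tool it already uses.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Step:
    name: str
    kind: str  # "human", "ai", or "checkpoint" (hypothetical labels)


# Hypothetical encoding of the analyst workflow above.
ANALYST_WORKFLOW = [
    Step("Define the decision", "human"),
    Step("Lock inputs", "human"),
    Step("AI synthesis", "ai"),
    Step("Checkpoint #1 (truth)", "checkpoint"),
    Step("AI structuring", "ai"),
    Step("Checkpoint #2 (decision quality)", "checkpoint"),
    Step("Publish memo", "human"),
]


def run_workflow(
    steps: list[Step], approvals: dict[str, bool]
) -> tuple[list[str], Optional[str]]:
    """Walk the steps in order and halt at the first unapproved checkpoint.

    Returns (names of completed steps, name of the blocking checkpoint or None).
    A checkpoint missing from `approvals` counts as not approved.
    """
    completed: list[str] = []
    for step in steps:
        if step.kind == "checkpoint" and not approvals.get(step.name, False):
            return completed, step.name
        completed.append(step.name)
    return completed, None


done, blocked_at = run_workflow(ANALYST_WORKFLOW, {"Checkpoint #1 (truth)": True})
print(blocked_at)  # Checkpoint #2 (decision quality)
print(len(done))   # 5
```

The design choice that matters is the default: a checkpoint nobody signed off on blocks the output, so silence never counts as a pass.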

Common analyst failure modes with AI

- Hallucinated “facts” in market sizes, benchmarks, or citations.

- Hidden assumptions (AI fills gaps silently).

- Overconfident narratives that ignore uncertainty.

- Metric theater: dashboards that look impressive but don’t change decisions.

The examples below are control prompts. They are not meant to replace judgment or automate decisions. Their purpose is to constrain AI behavior during specific workflow steps — helping structure information without introducing assumptions, ownership, or commitments.

Summarize the sources I paste below. Separate (1) verified facts from (2) assumptions from (3) unknowns. If you cannot verify a claim from the text, label it as unknown.

Given the decision context below, propose 5 hypotheses and for each: what data would confirm it, what data would falsify it, and the highest-risk assumption.

Review this draft analysis and list potential errors: misleading metrics, missing confounders, selection bias, and claims that require citation. Output a checklist.

Interpretation guide for checklists: treat “Yes” as permission to proceed, “No” as a stop signal that requires clarification, verification, or a human decision. If several items are “No,” narrow scope and reduce risk before continuing.
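The interpretation guide amounts to a small decision rule. Below is a minimal Python sketch of it. The function name is hypothetical, and the threshold of three failed items for “several” is an assumption, since the guide does not put a number on it.

```python
def interpret_checklist(answers: dict[str, bool], several: int = 3) -> str:
    """Apply the Yes/No interpretation guide to a completed checklist.

    `answers` maps each checklist item to True ("Yes") or False ("No").
    `several` is an assumed threshold; adjust it to your team's risk tolerance.
    """
    failed = [item for item, ok in answers.items() if not ok]
    if not failed:
        return "proceed"
    if len(failed) >= several:
        return "narrow scope and reduce risk: " + ", ".join(failed)
    return "stop and resolve: " + ", ".join(failed)


checklist = {
    "Sources verified": True,
    "Assumptions stated": False,
    "Confidence level given": True,
}
print(interpret_checklist(checklist))  # stop and resolve: Assumptions stated
```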

AI Playbook for Managers

Goal: drive outcomes through people — alignment, priorities, communication, execution quality, and decision accountability.

AI is allowed to:

- Draft updates, agendas, meeting notes, and follow-ups.

- Generate options for plans, roadmaps, and trade-offs (as inputs, not decisions).

- Summarize cross-functional discussions and highlight open questions.

- Prepare 1:1 templates and coaching questions.

- Draft performance feedback phrasing (with human review and ethical judgment).

Humans must own:

- Prioritization: what matters most and why (trade-offs are a manager’s job).

- People impact: tone, fairness, context, and organizational trust.

- Decision accountability: escalation, risk acceptance, and final calls.

- Reality checks: feasibility, capacity, dependencies, and incentives.

A manager uses AI to draft updates and plans but personally validates priorities, tone, and people impact.

Manager workflow (repeatable)

- Clarify intent: Is this communication, alignment, coaching, or decision-making?

- Provide context: audience, sensitivities, constraints, and what must not be implied.

- AI drafting step: generate 2–3 versions (short, medium, direct) with clear structure.

- Human checkpoint #1 (tone & truth): remove unsafe claims, adjust tone, confirm facts.

- AI refinement step: tighten for clarity, reduce ambiguity, add action items.

- Human checkpoint #2 (accountability): confirm you are willing to stand behind the message and its consequences.

- Send or publish: then track follow-through.

Common manager failure modes with AI

- Outsourcing judgment (letting AI “decide” priorities or performance conclusions).

- Trust damage from generic, unnatural communication.

- Policy and HR risk from poorly phrased feedback or sensitive topics.

- Misalignment because AI drafts can sound confident while being unclear.

The examples below are control prompts. They are not meant to replace judgment or automate decisions. Their purpose is to constrain AI behavior during specific workflow steps — helping structure information without introducing assumptions, ownership, or commitments.

Draft a team update based on the notes below. Then highlight which parts require manager validation before sending (facts, commitments, trade-offs, or sensitive wording).

Turn this messy discussion into: (1) decisions made, (2) open questions, (3) owners, (4) deadlines. Do not invent missing details; label unknowns explicitly.

Help me prepare for a difficult 1:1. Ask clarifying questions first, then draft a conversation plan with empathy, boundaries, and specific examples. Avoid HR/legal claims.
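The second prompt’s rule (do not invent missing details; label unknowns explicitly) also works as a data convention if action items are tracked outside the chat. A minimal Python sketch, assuming a hypothetical `ActionItem` record in which a missing owner or deadline stays `None` and is rendered as an explicit unknown instead of being filled in. The task names and owner below are illustrative.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ActionItem:
    task: str
    owner: Optional[str] = None     # None means nobody was named in the notes
    deadline: Optional[str] = None  # kept as free text; no date is inferred


def render(items: list[ActionItem]) -> str:
    """Format action items, labeling missing details instead of guessing them."""
    lines = []
    for item in items:
        owner = item.owner or "UNKNOWN (owner not stated)"
        deadline = item.deadline or "UNKNOWN (deadline not stated)"
        lines.append(f"- {item.task} | owner: {owner} | deadline: {deadline}")
    return "\n".join(lines)


def needs_follow_up(items: list[ActionItem]) -> list[str]:
    """Return tasks the manager must clarify before sending the summary."""
    return [i.task for i in items if i.owner is None or i.deadline is None]


items = [
    ActionItem("Finalize Q3 roadmap", owner="Dana", deadline="Friday"),
    ActionItem("Confirm vendor contract"),
]
print(render(items))
print(needs_follow_up(items))  # ['Confirm vendor contract']
```

The unknowns become the manager’s follow-up list, which keeps the accountability checkpoint with a person rather than with the draft.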

AI Playbook for Writers

Goal: produce clear, persuasive, credible writing that maintains voice and earns trust — while using AI to accelerate drafting and editing without becoming generic.

AI is allowed to:

- Create outlines, angle options, and section structures.

- Generate first drafts for paragraphs (with constraints).

- Rewrite for clarity, concision, and readability.

- Suggest alternative headlines and intros.

- Produce checklists for fact verification and consistency.

Humans must own:

- Voice and point of view: what the piece is really saying.

- Argument integrity: claims, logic, and evidence.

- Factual accuracy: sources, citations, and uncertainty labeling.

- Ethics and trust: what readers might misunderstand or misuse.

A writer uses AI for structure and drafts, but retains control over voice, argument, and factual integrity.

Writer workflow (repeatable)

- Define the reader and promise: who this is for, what they get, what it is not.

- Lock constraints: tone, structure, banned claims, required sections, and internal links.

- AI outlining step: generate 2–3 outline options and choose one.

- AI drafting step: draft section-by-section with explicit “do not invent facts” constraints.

- Human checkpoint #1 (voice): rewrite key lines so the piece sounds like you.

- Human checkpoint #2 (truth): verify claims; remove or qualify uncertain statements.

- AI polishing step: improve clarity and flow without changing meaning.

- Final pass: consistency, structure, and reader action.

Common writer failure modes with AI

- Voice dilution: content becomes bland, generic, and “AI-sounding.”

- Fake specificity: invented numbers, studies, or citations.

- Overproduction: more content, less clarity, weaker trust.

- Argument drift: AI subtly changes the meaning while “improving” style.
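Fake specificity can be partly screened mechanically. One crude heuristic, sketched here in Python as an assumption rather than a proven method, is to list any numbers that appear in an AI-revised draft but not in your original, since a clarity rewrite should not introduce new figures. It will not catch invented names, studies, or subtle argument drift; those still need the human truth checkpoint. The sample sentences are hypothetical.

```python
import re

# Matches integers, decimals, thousands separators, and optional percent signs.
NUMBER = re.compile(r"\d+(?:[.,]\d+)*%?")


def new_numbers(original: str, revised: str) -> set[str]:
    """Return numeric tokens present in the revised text but not the original."""
    return set(NUMBER.findall(revised)) - set(NUMBER.findall(original))


original = "Our survey of customers showed most prefer email."
revised = "Our survey of 1,200 customers showed 73% prefer email."
print(sorted(new_numbers(original, revised)))  # ['1,200', '73%']
```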

The examples below are control prompts. They are not meant to replace judgment or automate decisions. Their purpose is to constrain AI behavior during specific workflow steps — helping structure information without introducing assumptions, ownership, or commitments.

Create 3 outline options for this topic. For each option, write the reader promise and the main argument. Avoid generic claims. Ask me 5 clarifying questions before drafting.

Rewrite this draft to improve clarity while preserving my voice. Do not add facts. If you see an unsupported claim, flag it and suggest how to verify it.

Turn this article into a fact-check checklist: list each claim that needs verification, what source type would confirm it, and how risky it is if wrong.

Interpretation guide for checklists: treat “Yes” as permission to proceed, “No” as a stop signal that requires clarification, verification, or a human decision. If several items are “No,” narrow scope and reduce risk before continuing.

Limits and risks of AI playbooks (what can still go wrong)

Playbooks reduce risk, but they do not eliminate it. The most common failures happen when organizations treat AI as a shortcut to avoid thinking rather than a tool to increase leverage.

Risk 1: Speed replaces quality control

If teams skip checkpoints, AI increases output while quietly increasing error rates. In the short term it looks productive; in the long term it creates rework, distrust, and decision debt.

Risk 2: Responsibility leakage

AI output can “feel” authoritative. Teams may accept it without clarity on who owns the decision. This is how individual mistakes become organizational ones: no one was clearly responsible for stopping them.

Risk 3: Skill atrophy

When AI is used to skip hard thinking steps (scoping, reasoning, checking), people get faster but weaker. Over time, expertise declines and dependence increases.

Risk 4: Sensitive information and compliance

Many roles handle confidential data. A playbook must define what cannot be shared with AI, what requires anonymization, and what tools are approved.
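Part of such a policy can be enforced before text ever reaches an AI tool. The Python sketch below is a deliberately minimal illustration, assuming a hypothetical team-maintained list of blocked terms (such as internal project names): it redacts email addresses and reports any blocked terms it finds. Real anonymization and compliance requirements go well beyond this, so treat it as a starting point, not a control to rely on. “Project Falcon” and the address are made-up examples.

```python
import re

# Simple email pattern; it will miss unusual formats.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")


def prepare_for_ai(text: str, blocked_terms: list[str]) -> tuple[str, list[str]]:
    """Redact email addresses and report blocked terms found in the text.

    Returns (redacted text, blocked terms present). Under this sketch's
    assumed policy, a non-empty second item means: do not send the text.
    """
    redacted = EMAIL.sub("[EMAIL]", text)
    lowered = redacted.lower()
    found = [term for term in blocked_terms if term.lower() in lowered]
    return redacted, found


text = "Ask jane.doe@example.com about Project Falcon pricing."
redacted, found = prepare_for_ai(text, ["Project Falcon"])
print(redacted)  # Ask [EMAIL] about Project Falcon pricing.
print(found)     # ['Project Falcon']
```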

AI playbooks fail when they optimize for speed and ignore accountability, context, and verification.

Final human responsibility: what AI cannot do for any role

Across analysts, managers, and writers, the non-negotiable is the same: humans own outcomes. AI can generate drafts and options, but it cannot reliably own:

- the correctness of claims,

- the ethical and reputational consequences,

- trade-offs under constraints,

- people impact and trust,

- accountability when things go wrong.

The best AI playbooks protect exactly that: they keep humans in the loop where judgment matters and push AI into roles where speed and structure help. If a playbook removes human accountability, it is not a playbook — it is a risk generator.

The strongest AI playbooks preserve human responsibility while expanding leverage — not the other way around.

FAQ

What is an AI playbook for knowledge work?

An AI playbook is a role-specific workflow that defines how AI is used, where humans intervene, and who remains accountable for the outcome.

Why do analysts, managers, and writers need different AI workflows?

Because each role owns different decisions and risks. Analysts must validate assumptions, managers must weigh people impact, and writers must protect voice and credibility.

Can AI playbooks be reused across teams?

Yes, but they must be adapted to context: data sensitivity, compliance rules, output standards, and the specific decisions the team is responsible for.

Do AI playbooks reduce the need for expertise?

No. They raise the value of expertise by shifting work from producing drafts to evaluating truth, quality, and consequences.

What are the biggest risks of using AI at work without a playbook?

Common risks include hallucinated facts, unclear ownership, trust damage from generic outputs, compliance violations, and long-term skill atrophy.

How should a beginner use these playbooks differently than an expert?

Beginners should use stricter checkpoints and avoid delegating decisions. Experts can move faster because they can evaluate and correct AI output more reliably.